We all know that search engines like Google use crawlers to visit web pages. By crawling these pages, the search engine indexes them. But indexing is not instant. It depends on many signals that determine whether a page will be indexed or not.

We generally audit our websites with a focus on basic and technical issues, but we hardly ever analyze the crawl budget. Many SEO tools also can’t check it or collect accurate data. This is where we make a big mistake.

If we don’t optimize our website’s crawl budget, it can lead to a few difficulties:

- A lower indexing rate for the whole website.

- A smaller crawl budget for the website.

- An adverse impact on ranking and organic traffic.

So what is the crawl budget?

It’s a number, set by Google, that defines how many URLs Googlebot can crawl on a specific website. It depends on three factors: the crawl rate, the crawl response, and the crawl demand.

- The Crawl Rate – It’s the number of connections Googlebot may use to crawl the site, and also the time it waits between fetches.

- The Crawl Response – It depends on the response time of the website or host. If the site responds quickly and reliably to Googlebot, the crawl rate will increase.

- The Crawl Demand – If you don’t have content, or you add content that nothing links to, Googlebot’s demand to crawl the site will drop. Content that creates no value for the user, or that has not been updated for a long time, also loses crawl demand. So popularity and freshness play a vital role in crawl demand.

So what’s my crawl status?

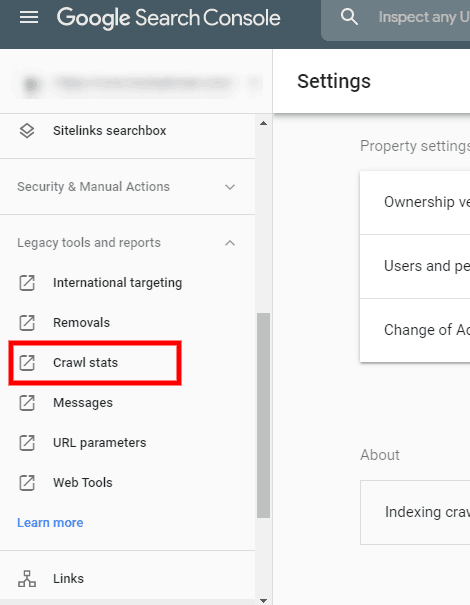

The obvious question that comes to mind is: what’s my website’s crawl status? You can quickly check it in Google Search Console (GSC). See the screenshot to find out exactly where to look in GSC.

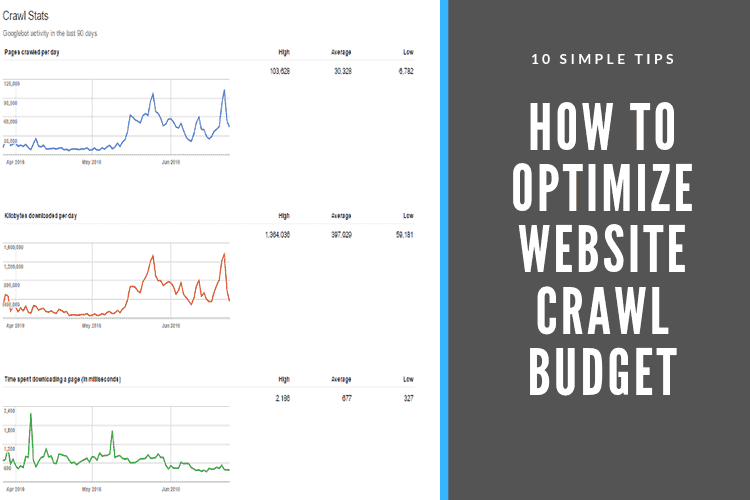

Here you can see the last 90 days of data on how your site has been crawled. If you see a stable graph, Googlebot is crawling your website consistently. If you see a drop in the graph, your website probably needs crawl budget optimization.
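If you also have access to your server logs, you can cross-check the GSC graph yourself. Below is a minimal sketch that counts Googlebot requests per day. It assumes the access log uses the common "combined" format (date in square brackets, user agent as the last quoted field), and the sample lines are made up for illustration. Keep in mind that a user agent string can be spoofed, so treat the numbers as a rough signal only.

```python
import re
from collections import Counter

# Assumed "combined" log format: [dd/Mon/yyyy:hh:mm:ss zone] ... "user agent"
LOG_LINE = re.compile(r'\[(\d{2}/\w{3}/\d{4}):[^\]]*\].*"([^"]*)"\s*$')


def googlebot_hits_per_day(lines):
    """Count requests per day whose user agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match.group(2):
            hits[match.group(1)] += 1
    return dict(hits)


# Hypothetical sample lines, not real traffic.
sample_log = [
    '66.249.66.1 - - [10/Mar/2024:06:25:11 +0000] "GET /blog/ HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/Mar/2024:06:27:40 +0000] "GET /about/ HTTP/1.1" 200 2048 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.9 - - [10/Mar/2024:07:01:02 +0000] "GET / HTTP/1.1" 200 1024 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    '66.249.66.1 - - [11/Mar/2024:05:10:00 +0000] "GET /blog/ HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
]

daily_hits = googlebot_hits_per_day(sample_log)
```

A falling daily count over several weeks tells roughly the same story as a dropping graph in GSC.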

How to optimize a website crawl budget?

There are a few simple tips to follow for optimizing a website’s crawl budget.

- Proper Navigation

Setting up proper navigation will help to increase the crawl rate. A scattered menu that sends Googlebot to unnecessary pages, or to the same page multiple times, will decrease the crawl rate.

- Robots.txt File

We often say the robots.txt file is the lord of the bots. This file holds the primary directives for Googlebot, which will follow what is written in it. I know most of us are aware of how a robots.txt file works. But if you are new to this, check out this guide for further reading.
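To see how a rule set behaves before you publish it, you can test it locally. The sketch below uses Python’s standard `urllib.robotparser` module. The rules, the `www.example.com` domain, and the `is_crawlable` helper are assumptions made up for this example, and Python’s parser may not match Googlebot’s own matching in every edge case.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: keep every bot out of demo and cart pages.
robots_txt = """\
User-agent: *
Disallow: /demo/
Disallow: /cart/
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())


def is_crawlable(url, user_agent="Googlebot"):
    """Return True if the parsed rules allow user_agent to fetch url."""
    return parser.can_fetch(user_agent, url)


blog_ok = is_crawlable("https://www.example.com/blog/post")
demo_ok = is_crawlable("https://www.example.com/demo/page")
```

Here `blog_ok` is `True` and `demo_ok` is `False`, because only `/demo/` and `/cart/` are disallowed.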

- Sitemap.xml

A sitemap is the second-best way for Googlebot to discover your posts and pages. So adding a sitemap to Google Search Console and keeping it updated is the best practice.
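If your CMS doesn’t generate a sitemap for you, a small script can do it. This is only a sketch: the `build_sitemap` helper and the example URLs and dates are hypothetical, and it covers just the `loc` and `lastmod` fields of the sitemap format.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from (url, lastmod) pairs, lastmod as YYYY-MM-DD."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, lastmod in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        ET.SubElement(entry, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical pages for illustration.
sitemap_xml = build_sitemap([
    ("https://www.example.com/", "2024-03-10"),
    ("https://www.example.com/blog/crawl-budget/", "2024-03-11"),
])
```

Regenerating the file whenever a page is added or changed keeps the `lastmod` values honest.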

- Using Hreflang Tags

If we have localized versions of our website, we should indicate them to Googlebot. So using hreflang tags is essential to help Googlebot identify which version of a page targets which language or region.
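As a rough illustration, the tags for one page can be generated from a simple mapping. The `hreflang_tags` helper, the language codes, and the URLs below are assumptions for this example only. Each localized version of the page would carry the same full set of tags in its `<head>`.

```python
from html import escape


def hreflang_tags(alternates, default_url=None):
    """Build <link rel="alternate"> tags from a {language code: URL} mapping."""
    tags = [
        f'<link rel="alternate" hreflang="{escape(lang)}" href="{escape(url)}" />'
        for lang, url in alternates.items()
    ]
    if default_url:
        # x-default marks the fallback page for users with no matching language.
        tags.append(
            f'<link rel="alternate" hreflang="x-default" href="{escape(default_url)}" />'
        )
    return tags


# Hypothetical localized versions of one page.
tags = hreflang_tags(
    {
        "en-us": "https://www.example.com/en-us/",
        "bn-bd": "https://www.example.com/bn-bd/",
    },
    default_url="https://www.example.com/",
)
```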

- HTTP Errors

Most of the time, 404 and 410 pages eat into a website’s crawl budget. So it’s best practice to fix every 4xx and 5xx status code you find on your website. You can use WebSite Auditor, Screaming Frog, or any other tool to find these errors.
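Once a crawler has exported its results, sorting out the problem URLs is straightforward. The sketch below assumes you already have `(url, status code)` pairs, for example from a CSV export. The `group_error_urls` helper and the sample data are hypothetical.

```python
from collections import defaultdict


def group_error_urls(crawl_results):
    """Group URLs by error class, ignoring 2xx and 3xx responses."""
    errors = defaultdict(list)
    for url, status in crawl_results:
        if 400 <= status < 500:
            errors["4xx"].append(url)
        elif 500 <= status < 600:
            errors["5xx"].append(url)
    return dict(errors)


# Hypothetical crawl export.
crawl_results = [
    ("https://www.example.com/", 200),
    ("https://www.example.com/old-post", 404),
    ("https://www.example.com/removed", 410),
    ("https://www.example.com/api/report", 500),
]

errors = group_error_urls(crawl_results)
```

The `4xx` group usually needs redirects or link cleanup, while the `5xx` group points to server problems.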

- URL Parameters

Please note that Googlebot spends crawl budget on every distinct URL, and each parameter variation of a page counts as its own URL. So creating unnecessary pages and leaving them live will eat into the crawl budget. Block or remove the pages you don’t need, especially demo pages. Also make sure the URLs you submit in GSC are correct.
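To get a feel for how many of your crawled URLs are really the same page, you can strip the parameters that don’t change the content and compare what is left. This is a sketch under assumptions: the `IGNORED_PARAMS` set is hypothetical, and you would replace it with the parameters your own site actually uses.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical list of parameters that do not change the page content.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid", "sort"}


def canonicalize(url):
    """Drop ignored query parameters and any fragment from a URL."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in IGNORED_PARAMS
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


crawled = [
    "https://www.example.com/shoes?utm_source=mail&color=red",
    "https://www.example.com/shoes?color=red&sort=price",
    "https://www.example.com/shoes?color=red",
]
unique_pages = {canonicalize(url) for url in crawled}
```

In this example three crawled URLs collapse into a single page, which means two of the three fetches were wasted.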

- Excessive Internal Linking

Sometimes we add excessive internal links to pass link juice across all the pages. But again, this hurts the crawl rate, because unnecessary crawling uses up your crawl budget. Interlink only where it is necessary.

- Website Speed

We all know that improving website speed is one of the key points for ranking. Poor server response and long load times will do nothing but reduce the crawl budget, because website speed has a direct connection with it. So make sure your website loads quickly.

- Fresh Content

If you add fresh, new content regularly, Googlebot will revisit the website more often, which helps to increase its crawl budget. Try not to use any duplicate content, and make sure to audit your old and new content regularly.

- Redirection

If you have a big website, it’s tough to keep redirect chains under control. But long or broken redirect chains reduce both the crawl rate and the crawl budget. Make sure to fix all the bad redirect chains and 404 errors to avoid this.
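If you can export your redirects as a simple source-to-target mapping, finding the chains takes only a few lines. The sketch below is an assumption-heavy example: the `redirects` dictionary, the paths in it, and both helper functions are hypothetical.

```python
def redirect_chain(start_url, redirects, max_hops=10):
    """Follow redirects from start_url and return every URL visited, in order."""
    chain = [start_url]
    seen = {start_url}
    while chain[-1] in redirects and len(chain) <= max_hops:
        target = redirects[chain[-1]]
        chain.append(target)
        if target in seen:  # redirect loop, stop here
            break
        seen.add(target)
    return chain


def long_chains(redirects):
    """Return the chains that need more than one hop to reach the final URL."""
    chains = {source: redirect_chain(source, redirects) for source in redirects}
    return {source: chain for source, chain in chains.items() if len(chain) > 2}


# Hypothetical redirect map: /old -> /older -> /new is a two-hop chain.
redirects = {"/old": "/older", "/older": "/new", "/promo": "/sale"}
chains_to_fix = long_chains(redirects)
```

Each chain found this way can usually be fixed by pointing the first URL straight at the final one.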

Crawl Budget Optimization is Not Easy

Remember, it’s not as easy or simple as it reads. If you are not good at technical SEO, it is better to leave it in expert hands. You can hire an SEO expert in Bangladesh to take care of your website’s issues.

Wrapping it Up

So we have learned that optimizing the crawl budget is essential for our website. We all do technical SEO but hardly ever notice it or point it out. Yet it strongly affects how Google crawls and indexes our pages, and through that our rankings. So let’s check it and start optimizing now.
